CAD / GIS platforms must go to the GPU

As users of graphical applications, we always expect our computers to have enough working memory. On that front, CAD / GIS programs have always been questioned or measured by the time they take to perform everyday tasks such as:

- Spatial analysis

- Rectification and registration of images

- Display of massive data

- Data management within a geodatabase

- Data service

The traditional PC has not changed much in recent years: RAM, hard disk, graphics memory and other features have simply kept increasing, but the CPU's operating logic has kept its original design (that's why we keep calling it the CPU). It has also been a drawback that as machines grow in capability, programs cancel out the gain by being designed to consume the new potential.

As an example (and only an example): when two users work at the same time, with the same equipment and data, one with AutoCAD 2010 and the other with Microstation V8i, each loading 14 raster images, a parcel file of 8,000 properties and a connection to an Oracle Spatial database, we ask ourselves:

What does one of the two have that keeps it from collapsing the machine?

The answer is not innovation; it is simply the way the program is developed, because this does not happen with Autodesk Maya, which does crazier things and performs better. The way of exploiting the PC is the same (so far, in the case of the two programs), and on that basis we tear into the programs, because we use them to work, and a lot. That is why some computers are classed as traditional PCs, workstations or servers: not because they are a different color, but because of how they perform when running demanding programs for graphic design, video processing, application development, server functions and, in our case, work with spatial data.

Less CPU, more GPU

One of the most notable recent changes in PC architecture is the GPU (Graphics Processing Unit), which gets better performance out of the machine by converting large routines into small simultaneous tasks, without going through the administration of the CPU (Central Processing Unit), whose working capacity is split among the revolutions of the hard disk, RAM, video memory and a few other details (not many).

Graphics cards are not made just to add video memory; they include a processor with hundreds of cores designed to run parallel processes. They have always had this (more or less), but the current advantage is that manufacturers now offer a somewhat open architecture (almost), so that software developers can count on a card with these capabilities and exploit its potential. PC Magazine this January mentions companies such as nVidia, ATI and others in the OpenCL alliance.

To understand the difference between CPU and GPU, here is an analogy:

CPU, all centralized. It is like a municipality with everything centralized: it has urban planning and knows it must control its growth, but it cannot even supervise the new constructions that violate the rules. Instead of handing this service over to private companies, it insists on keeping the role; residents do not know who to complain to about the neighbor who is taking over the sidewalk, and the city gets more disorderly every day.

Sorry, I was not talking about your mayor; this was just a CPU analogy, where the Central Processing Unit (in the case of Windows) has to keep the machine performing across processes such as:

- Programs that run when Windows starts, such as Skype, Yahoo Messenger, antivirus, the Java engine, etc. All of them consume part of the working memory, at low priority but unnecessarily, unless the startup list is changed with msconfig (which some people don't know about).

- Running services: those that are part of Windows, commonly used programs, connected hardware, or others that were uninstalled but are still running. These usually have medium / high priority.

- Programs in use, which consume resources with high priority. We feel their execution speed in our gut, because we curse when they are not fast despite a high-performance machine.

And although Windows does its juggling, practices like keeping many programs open, installing or uninstalling carelessly, or adding unnecessary themes that just look flashy make us ourselves guilty of the machine's poor performance.

It happens, then, that when we start one of the processes mentioned at the beginning, the processor racks its brains trying to prioritize it over the other programs in use. The few options for optimizing are RAM, video memory (which is often shared) and, if there is a graphics card, getting something out of it; depending on the type of hard drive and other trifles, the pitiful whining could be less.

GPU, parallel processes. It is like the municipality deciding to decentralize, grant concessions for or privatize the things that are beyond its reach, which, although they are large processes, are handed over as small tasks. Thus, based on current regulations, a private company is given the specific role of monitoring punishable violations. As a result (just an example), the citizen can enjoy the delightful pleasure of reporting the neighbor who takes his dog to do its business on his sidewalk, who builds a wall that takes part of the sidewalk, who parks his car improperly, etc. The company answers the call, goes to the place, processes the case, takes it to court and enforces the fine; half goes to the municipality, and the other half is a profitable business.

This is how the GPU works: programs can be designed so that they do not send massive processes in the conventional way, but as small filtered routines that run in parallel. Oh, wonderful!
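The idea of handing a big job over as many small tasks can be sketched in a few lines. What follows is only a CPU-side illustration in Python, not GPU code: the grid, the `scale_row` task and the worker count are all hypothetical, and Python threads will not actually make this faster. It is here only to show the shape of the decomposition, where each row is an independent task that any free worker can pick up.

```python
from concurrent.futures import ThreadPoolExecutor


def scale_row(row, factor):
    """Small independent task: rescale one row of elevation values."""
    return [value * factor for value in row]


def process_serial(grid, factor):
    """Conventional approach: a single worker walks the whole grid."""
    return [scale_row(row, factor) for row in grid]


def process_parallel(grid, factor, workers=4):
    """Split the job into per-row tasks and hand them to a pool of workers."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the same order as the input rows
        return list(pool.map(lambda row: scale_row(row, factor), grid))


# Hypothetical 3x4 elevation grid (values invented for illustration)
grid = [
    [100, 102, 105, 110],
    [101, 103, 107, 112],
    [102, 105, 109, 115],
]

serial = process_serial(grid, 2)
parallel = process_parallel(grid, 2)
print(serial == parallel)
```

Both functions produce the same result; the difference is that the second one never needs a single worker to own the whole grid. On a GPU the same pattern is taken much further, with one tiny task per cell spread over hundreds of cores.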

So far, not many vendors are building their applications with these features. Most of them aspire to reach 64 bits to solve their slowness problems, although we all know that Don Bill Gates will always eat into that capacity by loading unnecessary things onto the next versions of Windows. Windows' strategy includes taking advantage of the GPU through APIs designed to work on DirectX 11, which will surely be an alternative that everyone (or most) will accept, because they will prefer it as a standard over doing crazy things for each brand outside of OpenCL.

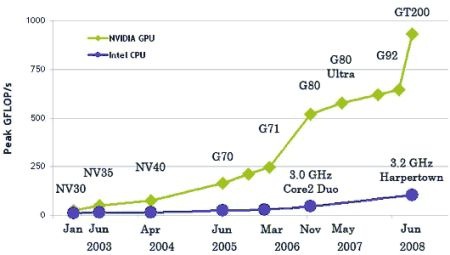

The graph shows an example of how, between 2003 and 2008, the nVidia GPU has been revolutionizing its capabilities compared to the Intel CPU. It also gives the geeky explanation of the difference.

But the potential of the GPU is there; hopefully CAD / GIS programs will squeeze the necessary juice out of it. It is already being talked about, and the most outstanding case is that of Manifold GIS with nVidia CUDA cards, in which a digital terrain model generation process that took more than 6 minutes was executed in just 11 seconds by taking advantage of a CUDA card, a speedup of more than 30x. A feat that won them the Geotech 2008.

In conclusion: we are going for the GPU, and we will surely see a lot of it in the next two years.

Hello Vincent, I see that you seem to be getting used to Windows 7.

Is there anything you miss about XP?

Are there any reasons why you would not go back to XP?

64-bit Windows 7 still lets you install 32-bit applications... and so far none of my GIS applications has stopped working.

"By the way, have you tried Manifold on 64-bit?"

Nope... Although my humble PC has a 64-bit AMD processor, I did not want to install 64-bit Windows, since a pile of applications and drivers would stop working. I think the right step would be to have a dedicated PC and install everything in 64 bits.

I have no doubt that Manifold would be one of those applications that make a difference running under 64 bits, and that it would not be a mere adaptation but would squeeze the most out of it (as they did with CUDA GPU technology).

Thanks for the information, Gerardo. By the way, have you tried Manifold on 64-bit?

Good post.

If you want to see the Manifold demonstration video, which shows the brutal processing speed of cards with CUDA technology (several of which can also be installed in parallel to combine their power, as long as there are free slots), go to this YouTube URL:

http://www.youtube.com/watch?v=1h-jKbCFpnA

Another contribution to Manifold's history: the first GIS program with native 64-bit support. And now, the first GIS to use CUDA technology.

regards